Metacognition can be considered a synonym for reflection in applied learning theory. However, metacognition is a very complex phenomenon. It refers to the cognitive control and monitoring of all sorts of cognitive processes like perception, action, memory, reasoning or emoting.

A recent #DPTstudent tweet chat dealt with the concept of metacognition broadly (list of links), but more specifically discussed the need for critical thinking in education and clinical practice. Most agreed on the dire need for critical thinking skills. But many #DPTstudents felt they had no conceptual construct for how to develop, assess, and continually evolve thinking skills in a formal, structured manner. Many tweeted they had never been exposed to the concept of metacognition or the specifics of critical thinking, although most stated that “critical thinking” and “clinical decision making” were commonly referenced.

What’s more important than improving mental skill sets?

Thinking is the foundation of conscious analysis. Yet, even with a keen focus on assessing and improving our thinking capacities, unconscious processes influence not only how and why we think, but what decisions we make, both in and out of the clinic. We are humans. Humans with biased minds. Brains that, by default, rationalize rather than think rationally…

Everyone thinks; it is our nature to do so. But much of our thinking, left to itself, is biased, distorted, partial, uninformed or down-right prejudiced. Yet the quality of our life and that of what we produce, make, or build depends precisely on the quality of our thought. Shoddy thinking is costly, both in money and in quality of life. Excellence in thought, however, must be systematically cultivated. – Via CriticalThinking.org

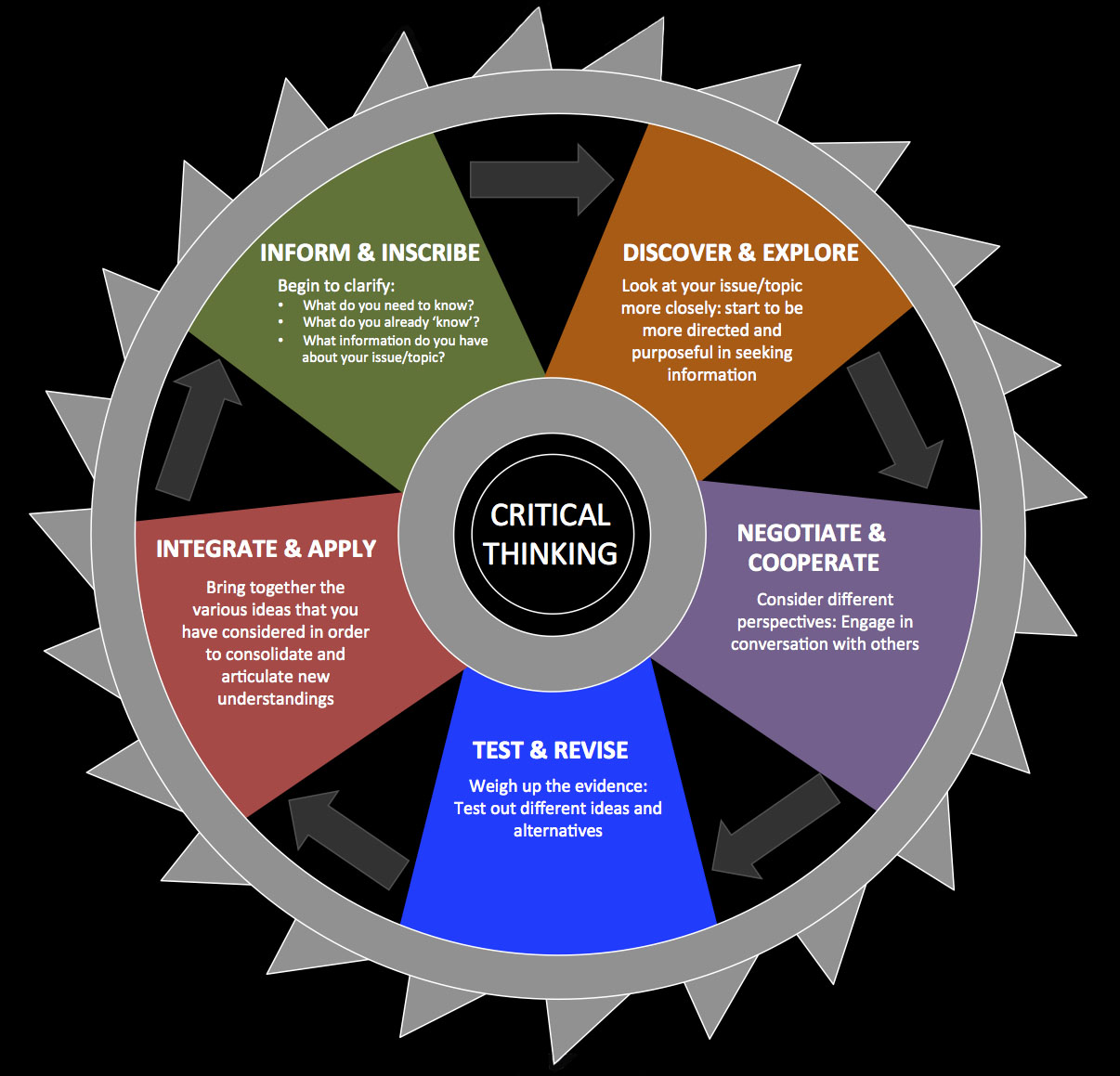

Need a Model

Mary Derrick observed previously in her post that the words critical thinking and clinical decision making are often referenced without much deeper discussion as to what these two concepts entail or how to develop them. Students agree that critical thinking and sound clinical decision making are stressed in their pre-professional education. But all levels of education appear to grossly lack formalized courses and structured approaches. The words are presented, but rarely systematically defined. The actual skills are rarely practiced and subsequently refined. Students thus lack not only exposure to and didactic knowledge of metacognition, critical thinking, and decision making, but also experience evolving these mental skills.

Need to teach how to think

Alan Besselink argued that a scientific inquiry model of patient care is, from his view, “the one approach.”

There is, in fact, one approach that provides a foundation for ALL treatment approaches: sound, science-based clinical reasoning and principles of assessment, combined with some sound logic and critical thinking.

One approach to all patients requires an ability to gather relevant information given the context of the patient scenario. This occurs via the clinician’s ability to ask the appropriate questions utilizing appropriate communication strategies. Sound critical thinking requires the clinician to hold their own reasoning processes to scrutiny in an attempt to minimize confirmation bias if at all possible. It also requires the clinician to have a firm regard for the nature of “normal” and the statistical variations that occur while adapting to the demands of life on planet earth.

Philosophically, I agree. It appears the question “what works?” has been overemphasized at the potential sacrifice of questions such as “how does this work?” “why do we do this?” and “why do we think this?”

Unfortunately, the construct of evidence based practice assumes the user applying the EBP model is well versed in not only research appraisal, but critical thinking. The structure of evidence based practice overly relies on outcomes studies. It lacks a built-in process for integrating other sources of knowledge, as well as the applicable question of “does this work as theoretically proposed?” The evidence hierarchy is structured around, and concerned with, efficacy and effectiveness only. Many will be quick to point out that, from a scientific rigor standpoint, the evidence hierarchy is structured this way because other forms of inquiry (basic physiology, animal models, case reports, case series, cohort studies, etc.) can not truly answer the question “what works?” without significant bias. Robust conclusions on causation can not be made via less controlled experiments. And this, of course, is true. In terms of assessing effectiveness and efficacy in isolation, the evidence hierarchy is appropriately structured.

But, the evidence hierarchy does not consider knowledge from other fields nor basic science, and thus by structure explicitly ignores plausibility in both theory and practice. To be fair, plausibility does not necessarily support efficacy nor effectiveness. So, it is still imperative, and absolutely necessary, to learn the methodology of clinical science. Understanding how the design of investigations affects the questions they can truly answer precedes appropriate assessment and conclusion. Limits to the conclusions that can be drawn are thus explicitly addressed.

Need the Why

Because of the focus on evidence based practice, which inherently (overly?) values randomized controlled trials and outcomes studies over basic science knowledge and prior plausibility, students continue to learn interventions and techniques while routinely asking “what works?” Questions of “how did I decide what works?” “why do I think this works?” and “what else could explain this effect?” also need to be commonly addressed in the classroom, clinic, and research. Such questions require formalized critical thought processes and skills.

These questions are especially applicable to the profession of physical therapy as many of the interventions have questionable, or at least variable, theoretical mechanistic basis in conjunction with broad ranging explanatory models. This is true regardless of effectiveness or efficacy. In fact, it is a separate issue. Physical therapy practice is prone to the observation of effect followed by a theoretical construct (story) that attempts to explain the effect. A focus on outcomes based research perpetuates these theoretical constructs even if the plausibility of the explanatory model is unlikely. In short, while our interventions may work, on the whole we are not quite sure why. @JasonSilvernail’s post EBP, Deep Models, and Scientific Reasoning is a must read on this topic.

The profession suffers from confirmation bias with regard to the constructs guiding the understanding of intervention effects. In addition, most, if not all, interventions physical therapists utilize will have a variety of non-specific effects. These two issues alone highlight the need for critical thought in order to ensure that our theoretical models, guiding constructs, and clinical processes evolve appropriately. And, further, to facilitate appropriate interpretation of outcomes studies.

It is not “what works?” vs. “why does this work?” Instead, a focus on integrating outcomes studies into the knowledge and research of why and how certain interventions may yield results is needed. This requires broadening our “evidence” lens to include physiology, neuroscience, and psychology as foundational constructs in education and clinical care. Further, research agendas focused on mechanistic based investigations are important to evolving our explanatory models. Education, research, and ultimately clinical care require both approaches. Interpretation, integration, and application of research findings, be they outcomes or mechanistic, necessitate robust cognitive skills. But do we formally teach these concepts? Do we formally practice the mental skills?

So, now what?

There appears to be an obvious need for, and obvious value in, learning how to think. But that is just the start. Learning to think about thinking is required to improve the specific skill of critical thinking. The understanding and application of evidence based practice need more robust analysis. Growth of critical thinking and metacognition, along with an evolution from evidence based to science based practice, produces the foundation for strong clinical decision making. The call for evidence based medicine to evolve to science based medicine focuses on ensuring clinicians interpret outcomes studies more completely. It appears to put strong emphasis on increased critical thinking and knowledge integration.

Does Evidence Based Medicine undervalue basic science and overvalue Randomized Control Trials?

A difference between Sackett’s definition [Evidence Based Practice] and ours [Science Based Medicine] is that by “current best evidence” Sackett means the results of RCTs…A related issue is the definition of “science.” In common use the word has at least three, distinct meanings:

1. The scientific pursuit, including the collective institutions and individuals who “do” science;

2. The scientific method;

3. The body of knowledge that has emerged from that pursuit and method (I’ve called this “established knowledge”; Dr. Gorski has called it “settled science”).

I will argue that when EBM practitioners use the word “science,” they are overwhelmingly referring to a small subset of the second definition: RCTs conceived and interpreted by frequentist statistics. We at SBM use “science” to mean both definitions 2 and 3, as the phrase “cumulative scientific knowledge from all relevant disciplines” should make clear. That is the important distinction between SBM and EBM. “Settled science” refutes many highly implausible medical claims—that’s why they can be judged highly implausible. EBM, as we’ve shown and will show again here, mostly fails to acknowledge this fact.

What to do?

1. Learn how humans think by default: Biased

2. Learn the common tricks and shortcuts our minds make and take

3. Understand logical fallacies, cognitive biases, and the mechanics of disagreement

4. Meta-cognate: Think about your own thinking with new knowledge

5. Find a mentor or partner to critique your thought processes: Prove yourself wrong

6. Critique thought processes, lines of reasoning, and arguments formally and informally

7. Debate and discuss using a formalized structure

8. Think, reflect, question, and assess

9. Discuss & Disagree

10. Repeat

Science Based Practice…

1. Foundations in basic science: chemistry, physics, physiology, mathematics

2. Prior plausibility: “grand claims require grand evidence”

3. Research from other relevant disciplines from physics to psychology

4. Mechanics of science: research design and statistics

5. Evidence Based (outcomes) Hierarchy
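
The role of prior plausibility in the list above can be made concrete with a small calculation. The sketch below is only an illustration, not part of the Science Based Medicine material: it applies Bayes' theorem to a single “positive” trial, and the function name `posterior_probability`, the priors, the statistical power of 0.8, and the false positive rate of 0.05 are all assumed values chosen for the example.

```python
def posterior_probability(prior, power=0.8, alpha=0.05):
    """Probability that a treatment effect is real after one 'positive' trial.

    prior: assumed probability, before the trial, that the effect is real
    power: assumed chance the trial is positive when the effect is real
    alpha: assumed chance the trial is positive when the effect is not real
    """
    true_positive = power * prior
    false_positive = alpha * (1.0 - prior)
    return true_positive / (true_positive + false_positive)


# Hypothetical priors: one plausible claim, one highly implausible claim.
for label, prior in [("plausible", 0.5), ("implausible", 0.01)]:
    posterior = posterior_probability(prior)
    print(f"{label}: prior={prior:.2f} -> posterior={posterior:.2f}")
```

Under these assumptions, the same positive trial leaves the plausible claim at roughly 0.94 but the implausible claim at only about 0.14, which is one way to read “grand claims require grand evidence.”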

Questions lead, naturally, to more questions. Inquiry breeds more inquiry. Disagreement forms the foundation of debate. And thus, Eric Robertson advocates for embracing ignorance:

Ignorance is not an end point. It’s not a static state. Ignorance isn’t permanent. Instead it’s the tool that enables one to learn. Ignorance is the spark that ignites scholarly inquiry.

Ignorance: the secret weapon of the expert.

Growth is rarely comfortable, but it’s necessary. And, that’s a lot to think about….

Resources

A Practical Guide to Critical Thinking

CriticalThinking.org

Logical Fallacies

Critical Thinking Structure

List of Fallacies

List of Cognitive Biases

Science Based Medicine

Clinical Decision Making Research (via Scott Morrison)

Clinical Decision Making Model

Thinking about Thinking: Metacognition Stanford University School of Education

Occam’s Razor

The PT Podcast: Science Series

Understanding Science via Tony Ingram of BBoyScience

I don’t get paid enough to think this hard by @RogerKerry1 (his blog is fantastic)

Evidence Based Medicine Is Broken

An interesting editorial…

Hi Kyle,

And thanks for another well-considered and stimulating post. So, it seems like you address the broad areas of metacognition and critical thinking and use the complexities and uncertainties of EBP as a tool to highlight the need for greater formal attention towards thinking. In many ways, this is not overly unique, e.g. some of the early manual therapists were continually skeptical about the knowledge sources they had access to in a pre-‘EBP’ era, and continually called for deep and critical thinking. However, what you have done is bring a ‘lost’ conversation back into the modern world. By doing this you have highlighted fresh and important challenges to the profession.

As you could probably guess, I would agree with practically everything here, and am continually worried that the EBP model we have so eagerly adopted is insufficient to make the claims it does (this is the subject area of my PhD). You have stated that there should be an evolution from EBP/M to SBM, and I really like the way you have framed this statement – i.e. as a profession we don’t really seem to understand what science is (develop theories to support outcomes etc, ‘reverse science’). There are, however, tempting arguments against this move from EBM proponents who continually report how we have been misled by scientific mechanisms and mechanistic reasoning in the past, and how RCTs have jumped in to ‘save the day’ (e.g. http://jrs.sagepub.com/content/103/11/433.long ).

I think that what you are saying is that ‘better’ critical thinking could prevent errors related to bias and mis-interpretation of data, and thought. Again, I would largely agree that this would be a major player in progressing our use of evidence but I don’t think it is the final solution. There is still plenty of work to be done with regards to the science we have adopted and the methods we use to make, for example, causal claims. In fact, the only statement you have made which I am not entirely comfortable with is “In terms of assessing effectiveness and efficacy in isolation, the evidence hierarchy is appropriately structured”. I would say the hierarchy is appropriately structured to represent the relative epistemological value of methods in their ability to establish statistical claims of differences between frequency distributions (in line with Adam LaCaze, e.g. http://www.tandfonline.com/doi/abs/10.1080/02691720802559438#.UtUZpfRdWu8 ). But this is different to their relative value and ability in informing future clinical decisions (e.g. http://onlinelibrary.wiley.com/doi/10.1111/j.1365-2753.2012.01908.x/abstract ). However, the only way to develop in this dimension is to continue critically thinking as well, so maybe you ARE completely correct!

Great post Kyle, and again you have given us the chance to reflect and meta-cognate on our own thoughts. Thanks.

Is anyone familiar with the book “Rational Diagnosis and Treatment” by Henrik Wulff? Both Sackett and Gordon Guyatt have given the impression that this book is what led to the formalization of the principles of EBM. Wulff was a professor of mine here in Copenhagen. The main point of the book is that Bayesian inference is used to establish evidence – in other words, what EBM is about is the statistical testing of hypotheses. If you haven’t made a clinical decision based on some statistically tested theory, then you haven’t made an EBM-based decision. Sometimes we cannot find the evidence, it doesn’t exist, or it is flawed. So we do as well as we can. But it is just a probabilistic statement, as is consistent with the scientific method. The RCT is the best way to infer causality. Its weakness is that it is heavily dependent on the validity of the outcome measures employed and the quality of data acquisition, two extremely overlooked threats to RCT validity. I can’t really differentiate between EBM and SBM.

A nice post with video http://mattlowpt.wordpress.com/2014/02/02/tensions-and-advances-in-ebp/

Another great thinking resource from Science Based Medicine. Book mentioned sounds fantastic. Thanks @SandyHiltonPT for sharing….

http://www.sciencebasedmedicine.org/how-to-think/

Our Brains: Predictably Irrationally (on Ted)

“We are not good at reasoning with uncertainty.”

http://www.ted.com/talks/peter_donnelly_shows_how_stats_fool_juries.html

My 10 Commandments of Physiotherapy by Adam Meakins @AdamMeakins

“Commandment No 10: Read and debate more, a lot more!”

Excellent post by Allen Besselink @Abesselink

Evidence Based Practice, Or How the Three Legged Stool Fails Us

“Scientific Plausibility: Does the treatment phenomenon proposed actually obey the laws of physics and have the scientific potential to exist in the world as we know it? I know that my chi is probably a little crooked, but so what? I was once told that using a foam roller created “elasticity” in the tissues; I am just not sure that science (nor collagen) would agree. If it doesn’t obey science, then “Clinical Expertise” just isn’t good enough – so put it to rest, please.

Anatomic Plausibility: Does the theory hold when you look at it from an anatomical perspective? My shifting cranial sutures demand anatomic plausibility. And then there is the iliotibial band. Are you really able to stretch this? Can you do so with one set of three 30 to 60 second stretches of dense connective tissue oh, every couple of days or before and after activity? Hell no. Don’t believe me? Get into an anatomy laboratory at your nearest university and grab one – then let me know what you think.

Critical Thinking: Critical thinking and clinical reasoning are founded on an adherence to the scientific method. Beliefs must be left at the door. Please, no maintaining of contradictions based on logical fallacies. For example, we have plenty of data to confirm that the inter-rater reliability of many manual techniques – including palpation – is horrendous. Yes, we’ve had it for decades. Yet we continue to allow ourselves the liberty of using these tools repeatedly to “confirm” a diagnosis.

That three-legged stool of evidence-based practice is only as good as the foundation upon which we rest it. So let’s get back to science and build a foundation that will truly support that stool. If we do, the stool can – and will – stand tall on its own.”

Erik Meira @ErikMeira via PT Inquest discussing Treatment Fallacies

Roger Kerry asks some serious questions in Evidence-Based Physiotherapy: A Crisis in Movement

Effectiveness vs. Efficacy by Kenny Venere of Physiological

Lessons From Dunning-Kruger by Steven Novella

Harriet Hall of Science Based Medicine on Why We Need Science

Complexity and the health care professions

Great post – sorry it has taken me so long to find this! Much of this resonates quite well with what I have been writing about with my blog on knowledge based practice. Rather than “explanatory models” I refer to “causal structure” being representative of our practice knowledge and that we need causal models to represent that structure. Like you, I completely agree that an integration between how we develop that knowledge and how we use that knowledge in practice is required. Not simply a translation from evidence to knowledge, but an integration of the processes with explicit consideration for the reasoning and logic behind that integrated process. I have found that the approach of emphasizing causal structure, models and inference resonates with students – here is a post from two of my students: https://knowledgebasedpractice.wordpress.com/2015/04/26/causal-model-considerations-for-entry-level-dpts/

While we must acknowledge the propensities of the human mind to be biased, we must not degrade human mental capacity too much; otherwise we degrade the entire enterprise of pursuing knowledge and truth. It is clear you understand that and I do not believe your words regarding human mental frailties are inclined to result in skepticism (i.e. but if we have completely biased minds how do we know we are not biased about even what we believe is rational, critical, logical thinking?).

You say: “Robust conclusions on causation can not be made via less controlled experiments.” Depending on what you mean by “controlled” I do not necessarily agree with this – control is a great way to rule out alternative explanations, but there are many criteria that can be utilized to develop a well justified case for a causal association. The fact is that there are many possible explanations for a pattern of data and control is how we attempt to limit those explanations. However, everything about a study is control, not just having a control group. Our observations are controlled so long as we take time to know the constraining controls in place at the time of our observations and how they would impact the resultant data. Epidemiologists have spent a long time developing rigorous methods to make causal claims based on observational studies. So, if by controlled you mean you need an experimental design and a control group to make a robust causal claim I disagree.
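
The point that control is about ruling out alternative explanations can be illustrated with a toy observational data set. The sketch below is an assumption-laden example, not a method taken from the comment: the counts are invented, the confounder (“severity”) is hypothetical, and stratified standardization stands in for the broader family of epidemiological adjustment methods.

```python
# Hypothetical counts: (recovered, total) for each severity stratum and arm.
# Mild patients were more likely to receive treatment, so severity confounds
# the crude comparison.
data = {
    "mild": {"treated": (64, 80), "untreated": (16, 20)},
    "severe": {"treated": (6, 20), "untreated": (24, 80)},
}


def risk(recovered, total):
    """Proportion of patients who recovered."""
    return recovered / total


def crude_risk_difference(data):
    """Compare arms while ignoring the confounder entirely."""
    risks = {}
    for arm in ("treated", "untreated"):
        recovered = sum(stratum[arm][0] for stratum in data.values())
        total = sum(stratum[arm][1] for stratum in data.values())
        risks[arm] = risk(recovered, total)
    return risks["treated"] - risks["untreated"]


def standardized_risk_difference(data):
    """Average the within-stratum differences, weighted by stratum size."""
    grand_total = sum(s["treated"][1] + s["untreated"][1] for s in data.values())
    difference = 0.0
    for s in data.values():
        weight = (s["treated"][1] + s["untreated"][1]) / grand_total
        difference += weight * (risk(*s["treated"]) - risk(*s["untreated"]))
    return difference


print(f"crude: {crude_risk_difference(data):.2f}")
print(f"standardized: {standardized_risk_difference(data):.2f}")
```

With these invented numbers the crude comparison suggests a large benefit, while the comparison within severity strata shows none. The adjustment only helps if the relevant confounders are known and measured, which is where explicit causal models come in.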

I completely agree with this statement: “But, the evidence hierarchy does not consider knowledge from other fields nor basic science, and thus by structure explicitly ignores plausibility in both theory and practice. To be fair, plausibility does not necessarily support efficacy nor effectiveness.” The hierarchy also only focuses on the limited set of questions that can be asked within one study or review of studies, which is what you mean earlier (I think) by not explicitly considering the prior knowledge (one of your commenters (Jonathan Comins) mentions Bayes’ theorem, great reference, very relevant to this point). This leads to a need to “translate” from isolated sets of information to the broader, more variable, larger multi-causal structures required for practice. And neither the evidence hierarchy nor EBP provides for that process. As mentioned earlier, I think we would agree that this should be integrated into the system, not simply translated. In my blog I provide further discussion about this through the use of graphical causal models: https://knowledgebasedpractice.wordpress.com/2015/01/29/graphical-causal-models/

“So, it is still imperative, and absolutely necessary, to learn the methodology of clinical science.” Yes, at least how to get from a clinical study to a causal model and adapt existing causal models based on new findings. There should be a repository of causal models available for clinicians, researchers and educators to collaborate on, develop, modify, etc. https://knowledgebasedpractice.wordpress.com/2015/04/22/introducing-the-physical-therapy-dags-repositories/

“Physical therapy practice is prone to the observation of effect followed by a theoretical construct (story) that attempts to explain the effect.” Yes, and this will (and should) continue to be part of our epistemological approach. It can be formalized: Peter Lipton describes the process as Inference to the Best Explanation in his book of that name, and C. S. Peirce was discussing an earlier version at the start of the 20th century (abduction, reasoning from observed effects to their possible causes). The question is how to formalize it for physical therapy based on the stratification of reality and the various ontologies we must deal with, and how to integrate that process of discovery and knowledge formation with inductive approaches to build our explanatory (I would say causal) models.

Science Based Medicine seems a better model than EBP (includes all that EBP includes with additional considerations); but I still do not see SBM as including an explicit integration between evidence, knowledge and practice as KBP is attempting with the explicit consideration of dynamic inference (induction, deduction and abduction), or a practical methodology for such an integration such as provided with the actual development and use of graphical causal models.

So, perhaps I am biased, but I continue to believe that what I am promoting as KBP (https://knowledgebasedpractice.wordpress.com) is offering you a more robust system / approach based on your concerns than Science Based Medicine. KBP seems to include what SBM includes, which logically entails that it would include all that EBM includes since EBM is a subset of SBM and SBM is a subset of KBP. Of course, KBP might be too encompassing to cohere and be anything….. 😉

The Engineering Mindset by Shane Parrish of Farnam Street